The escalating tensions between the United States, Israel, and Iran have once again brought one of the most controversial areas of modern technology into focus: the use of artificial intelligence in warfare.

Just days before a joint military operation in late February, the U.S. government cut ties with a key AI technology provider, exposing deep disagreements over how such systems should be used in military contexts. Meanwhile, experts gathered in Geneva to debate autonomous weapons as part of ongoing efforts to establish international regulations.

Yet analysts warn that technology is outpacing diplomacy. Advances in AI are rapidly reshaping the battlefield while global governance struggles to keep up.

AI is already deeply embedded in U.S. military operations. Systems powered by large language models are used for intelligence analysis, logistics, and real-time decision support.

Questions over AI “precision”

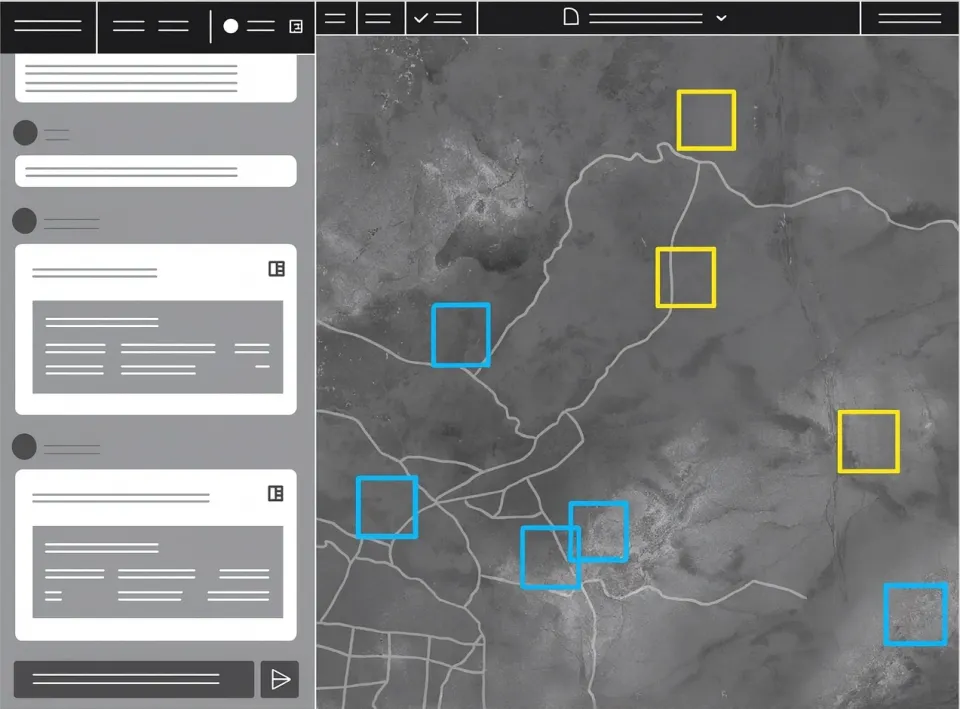

Platforms such as the Maven Smart System are designed to accelerate operations by identifying and prioritizing targets. While proponents argue that AI can improve accuracy, evidence from recent conflicts raises doubts.

Experts caution that there is no clear proof that AI reduces civilian casualties, and some data suggests the opposite may be true.

At the same time, the prospect of fully autonomous weapons operating without human oversight continues to spark intense ethical and legal concerns.

Tech companies and military tensions

The debate also extends to partnerships between governments and AI companies. The U.S. government's fallout with Anthropic highlighted growing tensions over which uses of AI in defense are acceptable.

The U.S. then turned to OpenAI, under agreements that reportedly bar the use of its technology for mass surveillance or fully autonomous weapons. Negotiations remain fluid, however, and the broader framework is still uncertain.

Ethical concerns and risks

Researchers and industry employees alike have raised alarms about potential misuse. Studies suggest that replacing human judgment with AI systems could increase the risk of unintended escalation in conflicts.

The challenge of regulation

Efforts to establish international rules continue, but progress is slow. Major military powers are reluctant to accept strict limitations, and there is still no agreed definition of what constitutes an autonomous AI weapon.

As experts note, AI is already deeply integrated into military systems, which makes meaningful regulation not only urgent but also exceptionally difficult.